Featured stories

Latest stories


5 Key Features to Look for in Secure Managed File Transfer Software

When evaluating secure managed file transfer software, check its security features, deployment options, logging and reporting capabilities, and more.

A Day in the Life with Software Engineer and YouTuber, Jenn Cho

In this video, software engineer and YouTuber Jenn Cho inspires her subscribers with tales from her onboarding journey at a new company and her take on the benefits of the OpenEdge developer training and certification course.

What are the Basics of Mergers and Acquisitions (M&A)?

Jeremy Segal, Executive Vice President of Corporate Development at Progress, walks us through the foundational aspects of M&A and discusses exactly what goes into an acquisition.

Survey: 56% of Organizations Plan to Invest in Human-Centered Software Design in the Next Year

A new survey sponsored by Progress offers insight into businesses’ approaches to building human-centered software.

How One Developer Enhanced His Job Search with OpenEdge Developer Training

Meet Octavio Antonio Huerta, a 26-year-old developer who leveraged the free Basic OpenEdge Developer Learning Path and Certification Exam to grow his ABL skills and find a job as an OpenEdge Developer.


The Benefits of a Data Hub for Effective Data, Analytics and AI Governance

Successfully implementing a data governance policy places many demands on a company’s IT infrastructure. In this blog, we’ll explore why a data hub architecture is a fitting approach for effectively integrating and governing complex, unstructured data in large organizations.

Meet Jutta Westerholt, Revenue Operations Manager at Progress

Today, we shine a spotlight on Jutta Westerholt, who has been recognized for “Own Our Tomorrow, Today,” one of the ProgressPROUD core values we strive to embody every day.

Secure Managed File Transfer Solutions: Best Practices for User Authentication and Access Control

Learn the best practices for authenticating users and granting access within your secure managed file transfer solution.

Topics

- Application Development

- Mobility

- Digital Experience

- Company and Community

- Data Platform

- Secure File Transfer

- Infrastructure Management

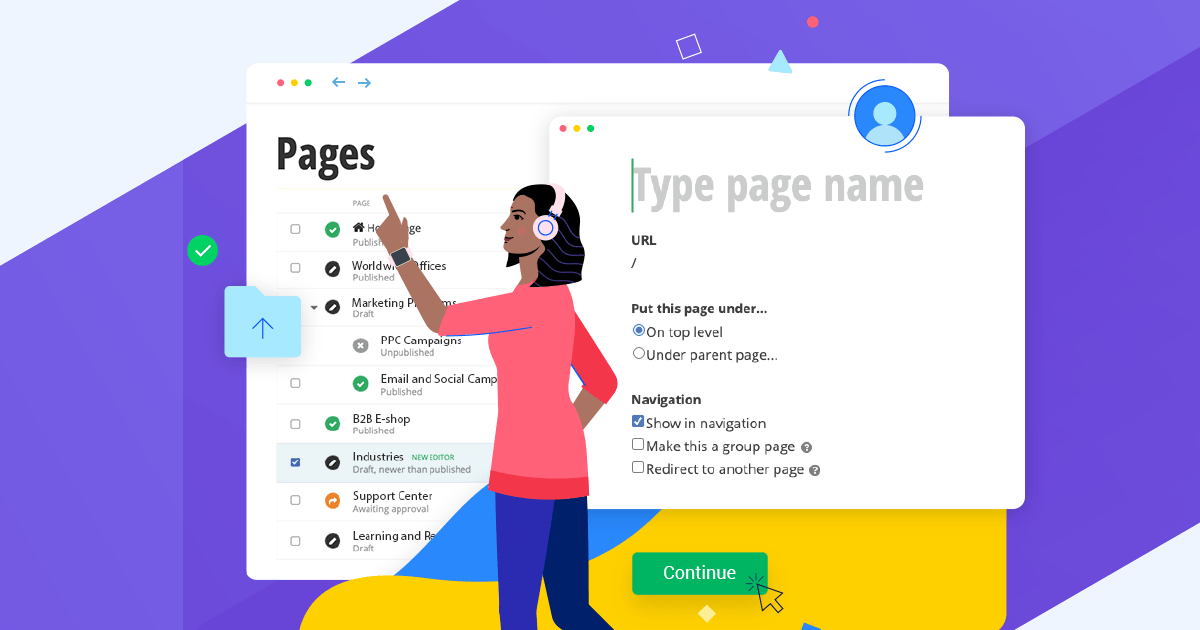

Sitefinity Training and Certification Now Available.

Let our experts teach you how to use Sitefinity's best-in-class features to deliver compelling digital experiences.


